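1. Introduction

Earlier articles covered how to use the selenium library and how to extract information through a browser. See: python爬虫之selenium库 (Python crawlers: the selenium library).

The goal this time: drive a browser with a crawler, search for a keyword, and store the information about the videos found in an Excel sheet.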

2. Creating the Excel sheet and the Chrome driver

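    from selenium import webdriver
    from selenium.webdriver.support.ui import WebDriverWait
    import xlwt

    n = 1  # next row to write; row 0 holds the headers
    word = input('请输入要搜索的关键词:')  # prompt: "Enter the keyword to search for"
    driver = webdriver.Chrome()
    wait = WebDriverWait(driver, 10)  # explicit waits give up after 10 seconds

    excl = xlwt.Workbook(encoding='utf-8', style_compression=0)
    # Sheet names may not contain an ASCII ':' and are limited to 31 characters
    sheet = excl.add_sheet(('b站视频_' + word)[:31], cell_overwrite_ok=True)
    # Header row: name, uploader, play count, duration, link, publish date
    sheet.write(0, 0, '名称')
    sheet.write(0, 1, 'up主')
    sheet.write(0, 2, '播放量')
    sheet.write(0, 3, '视频时长')
    sheet.write(0, 4, '链接')
    sheet.write(0, 5, '发布时间')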
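Because the sheet name includes whatever the user typed, it can run into Excel's sheet-name rules: at most 31 characters, and none of the characters `[ ] : \ ? / *`. Below is a minimal sketch of a sanitizer. `safe_sheet_name` and its `placeholder` parameter are hypothetical names for this illustration; they are not part of xlwt or of the original script.

```python
# Hypothetical helper: make an arbitrary string usable as an Excel sheet name.
FORBIDDEN = set('[]:\\?/*')  # characters Excel does not allow in sheet names
MAX_LEN = 31                 # Excel's sheet-name length limit


def safe_sheet_name(name, placeholder='_'):
    """Replace forbidden characters and truncate to the allowed length."""
    cleaned = ''.join(placeholder if ch in FORBIDDEN else ch for ch in name)
    # Fall back to a fixed name if nothing is left after cleaning
    return cleaned[:MAX_LEN] or 'Sheet1'


print(safe_sheet_name('b站视频:MV'))
```

With a helper like this, the sheet could be created as `excl.add_sheet(safe_sheet_name('b站视频:' + word), cell_overwrite_ok=True)`.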

3. Defining the search function

The function contains button_next, which jumps to the next page. The HTML shows why it is not located with By.CLASS_NAME:

    <button class="vui_button vui_pagenation--btn vui_pagenation--btn-side">下一页</button>

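The class attribute is long and holds three space-separated class names. By.CLASS_NAME expects a single class name, so passing the whole attribute value, spaces included, fails with: selenium.common.exceptions.NoSuchElementException: Message: no such element

So By.CSS_SELECTOR is used to locate the button instead.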
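One way to see the difference: in a CSS selector, several classes on the same element are chained with dots rather than separated by spaces. The sketch below builds such a selector from the class attribute shown above. `css_from_class_attr` is a hypothetical helper, not a Selenium function, and other buttons on the page may share these classes, so the result can match more than one element (the article's own, longer path pins down a specific button).

```python
def css_from_class_attr(tag, class_attr):
    """Build 'tag.class1.class2...' from a space-separated class attribute."""
    return tag + ''.join('.' + name for name in class_attr.split())


selector = css_from_class_attr(
    'button', 'vui_button vui_pagenation--btn vui_pagenation--btn-side')
print(selector)
# -> button.vui_button.vui_pagenation--btn.vui_pagenation--btn-side
```

The resulting string can be passed to `driver.find_element(By.CSS_SELECTOR, selector)`.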

    def search():
        driver.get("https://www.bilibili.com/")
        search_box = wait.until(EC.presence_of_element_located((By.CLASS_NAME, 'nav-search-input')))
        button = wait.until(EC.element_to_be_clickable((By.CLASS_NAME, 'nav-search-btn')))
        search_box.send_keys(word)
        button.click()
        print('开始搜索:' + word)  # "Searching for: <keyword>"
        # Switch to the most recently opened window (the search results)
        windows = driver.window_handles
        driver.switch_to.window(windows[-1])
        get_source()
        # Jump from page 1 to page 2
        button_next = driver.find_element(By.CSS_SELECTOR, '#i_cecream > div > div:nth-child(2) > div.search-content > div > div > div.flex_center.mt_x50.mb_x50 > div > div > button:nth-child(11)')
        wait.until(EC.presence_of_element_located((By.CSS_SELECTOR, '#i_cecream > div > div:nth-child(2) > div.search-content > div > div > div.video-list.row > div:nth-child(1) > div > div.bili-video-card__wrap.__scale-wrap')))
        button_next.click()
        get_source()

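4. Defining the next-page function

This section defines a dedicated next-page function, so why does the search function above also click "next page"? The answer comes from inspecting the page's HTML: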

    # CSS_SELECTOR path of the next-page button on page 1 (used to jump to page 2)
    #i_cecream > div > div:nth-child(2) > div.search-content > div > div > div.flex_center.mt_x50.mb_x50 > div > div > button:nth-child(11)
    # CSS_SELECTOR path of the next-page button on later pages
    #i_cecream > div > div:nth-child(2) > div.search-content > div > div > div.flex_center.mt_x50.mb_lg > div > div > button:nth-child(11)

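The CSS_SELECTOR on page 1 differs from the one on later pages (the container has class mb_x50 on page 1 and mb_lg afterwards), so the jump from page 1 to page 2 is handled separately inside the search function above.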
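Since the two paths differ only in one class, an alternative is a single CSS selector list (two selectors joined by a comma), which matches whichever variant is present. The following is only a sketch under that assumption and has not been checked against the live page. `BASE`, `TAIL` and `next_button_selector` are names introduced here for illustration.

```python
# Shared prefix and suffix of the two paths quoted above
BASE = '#i_cecream > div > div:nth-child(2) > div.search-content > div > div'
TAIL = 'div > div > button:nth-child(11)'


def next_button_selector(margin_classes=('mb_x50', 'mb_lg')):
    """Return a comma-separated selector list covering both page layouts."""
    parts = [
        f'{BASE} > div.flex_center.mt_x50.{cls} > {TAIL}'
        for cls in margin_classes
    ]
    return ', '.join(parts)


print(next_button_selector())
```

If this holds up on the real page, one call such as `wait.until(EC.element_to_be_clickable((By.CSS_SELECTOR, next_button_selector())))` could serve both the first page and the later ones.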

    def next_page():
        button_next = wait.until(EC.presence_of_element_located((By.CSS_SELECTOR, '#i_cecream > div > div:nth-child(2) > div.search-content > div > div > div.flex_center.mt_x50.mb_lg > div > div > button:nth-child(11)')))
        button_next.click()
        wait.until(EC.presence_of_element_located((By.CSS_SELECTOR, '#i_cecream > div > div:nth-child(2) > div.search-content > div > div > div.video-list.row > div:nth-child(1) > div > div.bili-video-card__wrap.__scale-wrap')))
        get_source()

5. Defining the function that fetches the page source

Both functions above call get_source(), which is defined now. It grabs the page source and hands it to BeautifulSoup.

    def get_source():
        html = driver.page_source
        soup = BeautifulSoup(html, 'lxml')
        save_excl(soup)

6. Extracting the elements and saving them to the Excel sheet

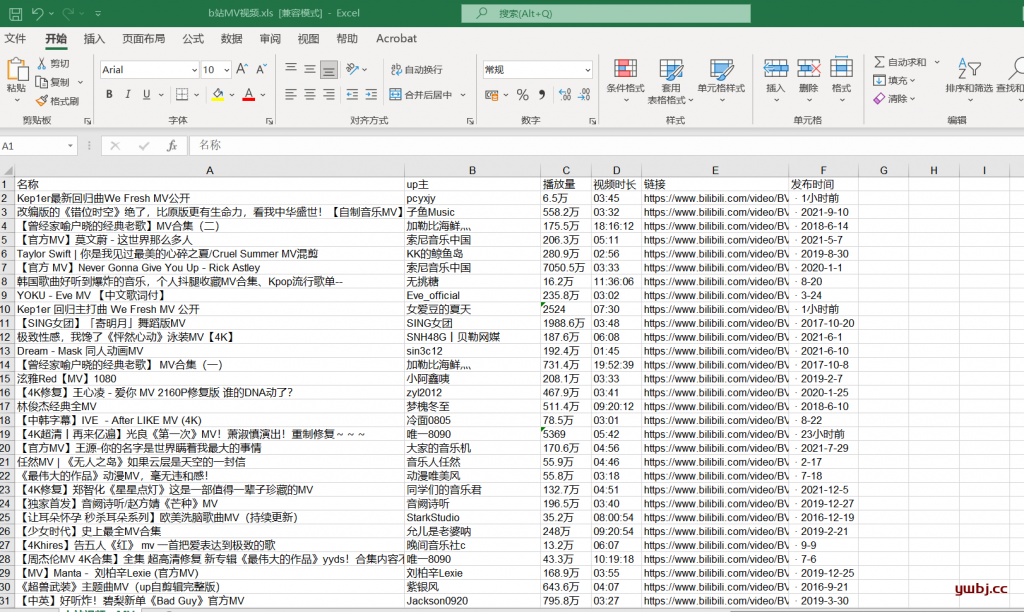

BeautifulSoup loops over the video cards on the page, and each card's information is written to the Excel sheet created earlier.

    def save_excl(soup):
        global n  # row counter shared across pages
        cards = soup.find(class_='video-list row').find_all(class_="bili-video-card")
        for item in cards:
            video_name = item.find(class_='bili-video-card__info--tit').text
            video_up = item.find(class_='bili-video-card__info--author').string
            video_date = item.find(class_='bili-video-card__info--date').string
            video_play = item.find(class_='bili-video-card__stats--item').text
            video_times = item.find(class_='bili-video-card__stats__duration').string
            # Links are protocol-relative ("//..."); prefix the scheme only in that case
            href = item.find('a')['href']
            video_link = 'https:' + href if href.startswith('//') else href
            print(video_name, video_up, video_play, video_times, video_link, video_date)
            sheet.write(n, 0, video_name)
            sheet.write(n, 1, video_up)
            sheet.write(n, 2, video_play)
            sheet.write(n, 3, video_times)
            sheet.write(n, 4, video_link)
            sheet.write(n, 5, video_date)
            n = n + 1
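The links in the cards start with `//` (protocol-relative URLs), which is why the scheme has to be added. A plain `str.replace('//', ...)` rewrites every `//` in the string, so a standard-library alternative is `urllib.parse.urljoin`, which resolves such links against a base URL and leaves absolute URLs untouched. A small sketch follows; `absolute_link` is a hypothetical helper and the video IDs are made-up placeholders.

```python
from urllib.parse import urljoin

BASE_URL = 'https://www.bilibili.com/'  # the site opened in search()


def absolute_link(href):
    """Resolve a protocol-relative or relative href against BASE_URL."""
    return urljoin(BASE_URL, href)


# 'BV_PLACEHOLDER' is a placeholder, not a real video ID
print(absolute_link('//www.bilibili.com/video/BV_PLACEHOLDER/'))
print(absolute_link('https://www.bilibili.com/video/BV_PLACEHOLDER/'))
```

Both calls print the same absolute `https://` URL, so the helper is safe to apply whether or not the scheme is already present.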

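7. Defining main() to loop through the pages

search() already covers pages 1 and 2, and the loop then calls next_page() nine more times, so by default 11 pages are collected before the crawler stops. Adjust the page count as needed. The workbook is saved under its final file name at the end.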

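    def main():
        search()  # scrapes pages 1 and 2
        for _ in range(9):  # nine more pages
            next_page()
        driver.quit()  # close every window and end the browser session

    if __name__ == '__main__':
        main()
        excl.save('b站' + word + '视频.xls')  # file name: "b站<keyword>视频.xls"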
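As written, the workbook is only saved after main() returns, so an exception on a late page (for example when the results run out of pages) would lose everything collected so far. One possible variation, sketched below, stops at the first failure and still saves. `crawl` and its parameters are hypothetical; the three callables stand in for the article's search(), next_page() and the excl.save(...) call.

```python
def crawl(search, next_page, save, extra_pages=9):
    """Run search(), then next_page() up to extra_pages times; always save."""
    pages_done = 0
    try:
        search()          # in the article, search() scrapes pages 1 and 2
        pages_done = 2
        for _ in range(extra_pages):
            next_page()
            pages_done += 1
    except Exception as exc:  # e.g. a timeout when no next-page button exists
        print(f'stopped after {pages_done} pages: {exc}')
    finally:
        save()            # keep whatever was collected so far
    return pages_done
```

In the script it might be called as `crawl(search, next_page, lambda: excl.save('b站' + word + '视频.xls'))`, followed by closing the browser.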

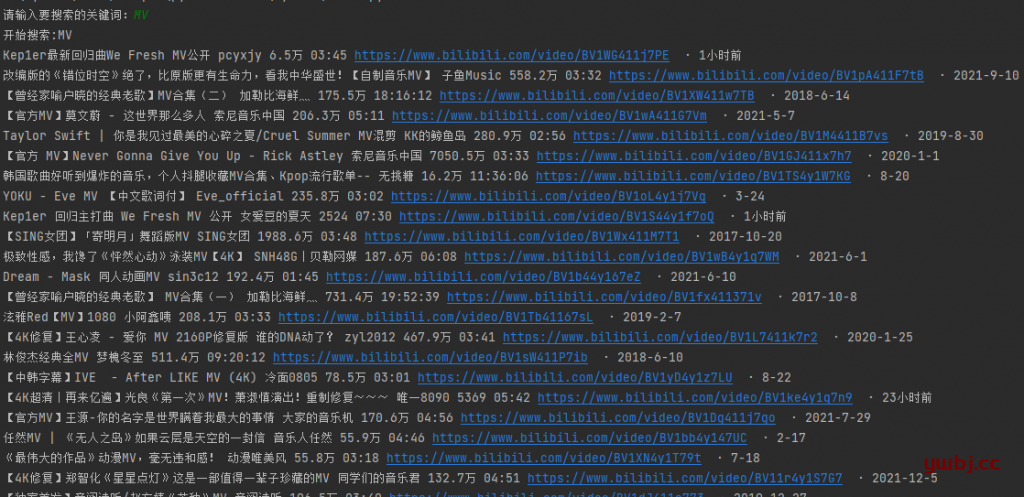

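8. Final code and results

The CSS_SELECTOR paths here reach as deep into the DOM as I could manage, which is why they are so long. With shorter paths the wait often finished before the whole page had loaded, and extraction then failed with an error because the information was not there yet.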
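Each `wait.until(...)` call keeps re-checking its condition until the condition returns something truthy or the 10-second timeout passes. The sketch below imitates that polling loop with the standard library only. It is a simplified stand-in, not Selenium's implementation, and `wait_until`, `timeout` and `poll` are names chosen for this illustration. It shows why the choice of condition matters: the loop returns as soon as the condition holds, so a condition on a shallow container can succeed before the deeper content has been rendered.

```python
import time


def wait_until(condition, timeout=10.0, poll=0.5):
    """Call condition() repeatedly until it is truthy or timeout elapses."""
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result  # returns immediately once the condition holds
        if time.monotonic() >= deadline:
            raise TimeoutError(f'condition not met within {timeout} seconds')
        time.sleep(poll)
```

A condition tied to a deep element, such as the `h3` title inside the first video card, only becomes true once that card has actually been rendered.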

    from selenium import webdriver
    from selenium.webdriver.common.by import By
    from bs4 import BeautifulSoup
    from selenium.webdriver.support.ui import WebDriverWait
    from selenium.webdriver.support import expected_conditions as EC
    import xlwt

    n = 1  # next row to write; row 0 holds the headers
    word = input('请输入要搜索的关键词:')  # prompt: "Enter the keyword to search for"
    driver = webdriver.Chrome()
    wait = WebDriverWait(driver, 10)

    excl = xlwt.Workbook(encoding='utf-8', style_compression=0)
    # Sheet names may not contain an ASCII ':' and are limited to 31 characters
    sheet = excl.add_sheet(('b站视频_' + word)[:31], cell_overwrite_ok=True)
    # Header row: name, uploader, play count, duration, link, publish date
    sheet.write(0, 0, '名称')
    sheet.write(0, 1, 'up主')
    sheet.write(0, 2, '播放量')
    sheet.write(0, 3, '视频时长')
    sheet.write(0, 4, '链接')
    sheet.write(0, 5, '发布时间')


    def search():
        driver.get("https://www.bilibili.com/")
        search_box = wait.until(EC.presence_of_element_located((By.CLASS_NAME, 'nav-search-input')))
        button = wait.until(EC.element_to_be_clickable((By.CLASS_NAME, 'nav-search-btn')))
        search_box.send_keys(word)
        button.click()
        print('开始搜索:' + word)  # "Searching for: <keyword>"
        # Switch to the most recently opened window (the search results)
        windows = driver.window_handles
        driver.switch_to.window(windows[-1])
        wait.until(EC.presence_of_element_located((By.CSS_SELECTOR, '#i_cecream > div > div:nth-child(2) > div.search-content > div > div > div.video.i_wrapper.search-all-list')))
        get_source()
        print('开始下一页:')  # "Moving to the next page"
        # Jump from page 1 to page 2 (the button path differs from later pages)
        button_next = driver.find_element(By.CSS_SELECTOR, '#i_cecream > div > div:nth-child(2) > div.search-content > div > div > div.flex_center.mt_x50.mb_x50 > div > div > button:nth-child(11)')
        button_next.click()
        wait.until(EC.presence_of_element_located((By.CSS_SELECTOR, '#i_cecream > div > div:nth-child(2) > div.search-content > div > div > div.video-list.row > div:nth-child(1) > div > div.bili-video-card__wrap.__scale-wrap > div > div > a > h3')))
        get_source()
        print("完成")  # "Done"


    def next_page():
        button_next = wait.until(EC.presence_of_element_located((By.CSS_SELECTOR, '#i_cecream > div > div:nth-child(2) > div.search-content > div > div > div.flex_center.mt_x50.mb_lg > div > div > button:nth-child(11)')))
        button_next.click()
        print("开始下一页")  # "Moving to the next page"
        wait.until(EC.presence_of_element_located((By.CSS_SELECTOR, '#i_cecream > div > div:nth-child(2) > div.search-content > div > div > div.video-list.row > div:nth-child(1) > div > div.bili-video-card__wrap.__scale-wrap > div > div > a > h3')))
        get_source()
        print("完成")  # "Done"


    def save_excl(soup):
        global n  # row counter shared across pages
        cards = soup.find(class_='video-list row').find_all(class_="bili-video-card")
        for item in cards:
            video_name = item.find(class_='bili-video-card__info--tit').text
            video_up = item.find(class_='bili-video-card__info--author').string
            video_date = item.find(class_='bili-video-card__info--date').string
            video_play = item.find(class_='bili-video-card__stats--item').text
            video_times = item.find(class_='bili-video-card__stats__duration').string
            # Links are protocol-relative ("//..."); prefix the scheme only in that case
            href = item.find('a')['href']
            video_link = 'https:' + href if href.startswith('//') else href
            print(video_name, video_up, video_play, video_times, video_link, video_date)
            sheet.write(n, 0, video_name)
            sheet.write(n, 1, video_up)
            sheet.write(n, 2, video_play)
            sheet.write(n, 3, video_times)
            sheet.write(n, 4, video_link)
            sheet.write(n, 5, video_date)
            n = n + 1


    def get_source():
        html = driver.page_source
        soup = BeautifulSoup(html, 'lxml')
        save_excl(soup)


    def main():
        search()  # scrapes pages 1 and 2
        for _ in range(9):  # nine more pages
            next_page()
        driver.quit()  # close every window and end the browser session


    if __name__ == '__main__':
        main()
        excl.save('b站' + word + '视频.xls')  # file name: "b站<keyword>视频.xls"

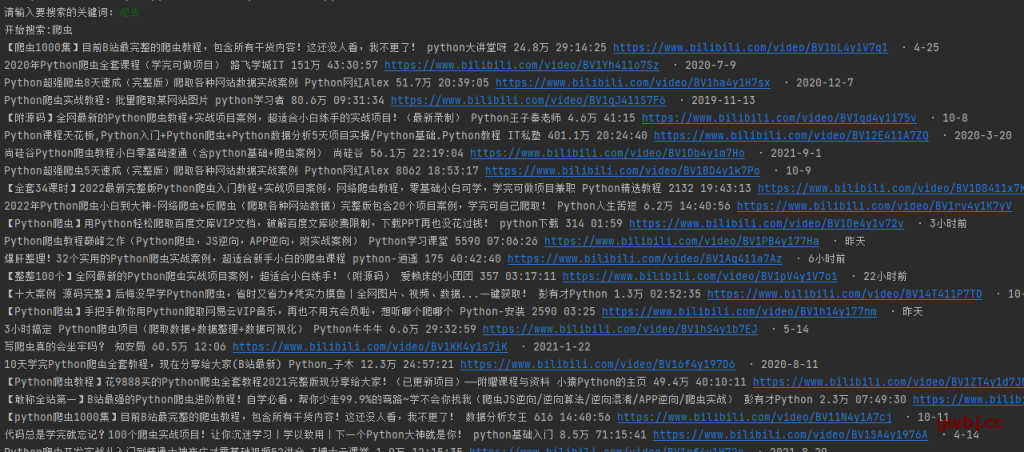

Running the script and entering MV as the keyword gives:

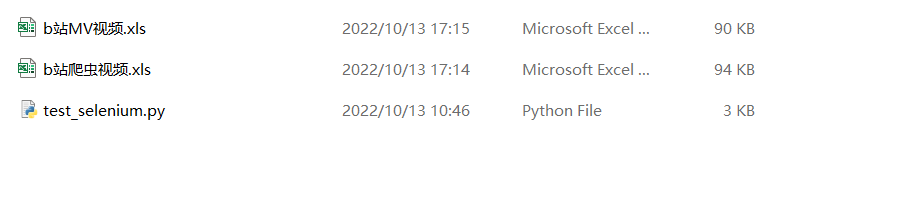

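The Excel file has also been generated in the folder.

Opening it shows that the information was saved.

Other keywords work the same way.

That completes a simple crawler for search results. To run it hidden on a server (without a visible browser window), see my previous article: python爬虫之selenium库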

Auth:运维笔记

Date:2022/10/13
